How AI Infra's "Messy Middle" technologies found their stride

Opportunity in AI’s “Messy Middle” Infrastructure

2025 was the year of AI’s “Messy Middle”. The ecosystem of tools that lies between models and core infrastructure on one side and end-user applications on the other is emerging as a key driver of innovation and enterprise value. As AI deployments and ROI take center stage, the tooling, platforms, and services that turn AI from concept into production at scale become crucial. Startups in this space have historically faced tough odds: the market wasn’t really there, scaling was difficult and sustained business value was elusive. Now, the landscape is shifting. With new market momentum and notable exits, this part of the stack is firmly on the (market) map.

The Messy Middle’s evolution from MLOps to LLMOps and now AgentOps is expanding opportunities for founders and investors. The industry is converging on the need for mature, reliable infrastructure, and a surge of acquisitions signals just how essential this layer has become.

The Early Days: MLOps (2016–2021)

Data Science and classical Machine Learning began gaining popularity as a discipline in the mid-2000s, driven by the rise of big data and web-scale companies. By the early 2010s, many enterprises adopted classical ML techniques for use cases such as forecasting, personalization, fraud detection, and optimization. The research breakthroughs in Deep Learning in the early 2010s (e.g. AlexNet in 2012), followed by hyperscalers successfully adopting these architectures (e.g. Google and Facebook’s ad targeting models), put a much sharper focus on the role of data science and ML in an organization. Deep Learning promised to take things to the next level - data became larger and more heterogeneous (including unstructured), models increased in size, and compute infrastructure became a lot more important. As a result, enterprises began to consider making the process of training/operationalizing models a lot more systematic.

A new category of software tools, “DevOps for ML”, emerged and was appropriately termed “MLOps”: the tools and software needed to build, deploy, scale, manage and improve evolving AI systems. Plenty of MLOps startups emerged in response. However, very few were able to build real businesses because they faced two core challenges:

- Challenging ROI: ROI for large-scale predictive ML, and even more so for computer vision or early NLP, was very hard to measure for all but a few organizations. A fraction of a percent improvement in a recommendation model for Facebook could map to $100M+ in revenue gained. For the average enterprise, use cases and ROI were a lot murkier.

- Limited Production Deployment: Few machine learning models moved past R&D prototypes. The problem went beyond the operational challenges that MLOps promised to solve: training a model for each use case was hard and costly, and few firms had the internal ML expertise to execute on proprietary model development. The bottleneck was in building these models, and budgets went into data teams rather than software tooling.

Outside of a few companies like Weights & Biases, few venture-backed startups were able to build large businesses (>$10M ARR). Arguably, the only sector of the market that flourished was the set of platforms supporting traditional data science and classical ML, e.g. the Databricks ecosystem or data science platforms such as DataRobot, Dataiku and Domino Data Lab. This ecosystem got a lift from the new demand for AI. Most of the exits from this era were for team and technology, and only a handful sold for >$200M (e.g. Xilinx/DeePhi, Apple/Lattice Data, Apple/Xnor, Apple/Turi).

The LLM Transformation (2022–2025)

The rise of Large Language Models changed everything. Accessible LLMs brought advanced AI within reach for a broader set of developers. The market for those building and deploying AI applications expanded as the target persona shifted to software engineers rather than the smaller universe of Data Scientists/ML engineers. Teams could now build production AI applications at speeds previously unheard of.

Now, with real AI applications being built and shipped, there finally came a need for a specialist “LLMOps” layer to operationalize these applications and run them at scale. There also emerged budget for these tools, as well as a strong impetus to move applications to production and start showing ROI.

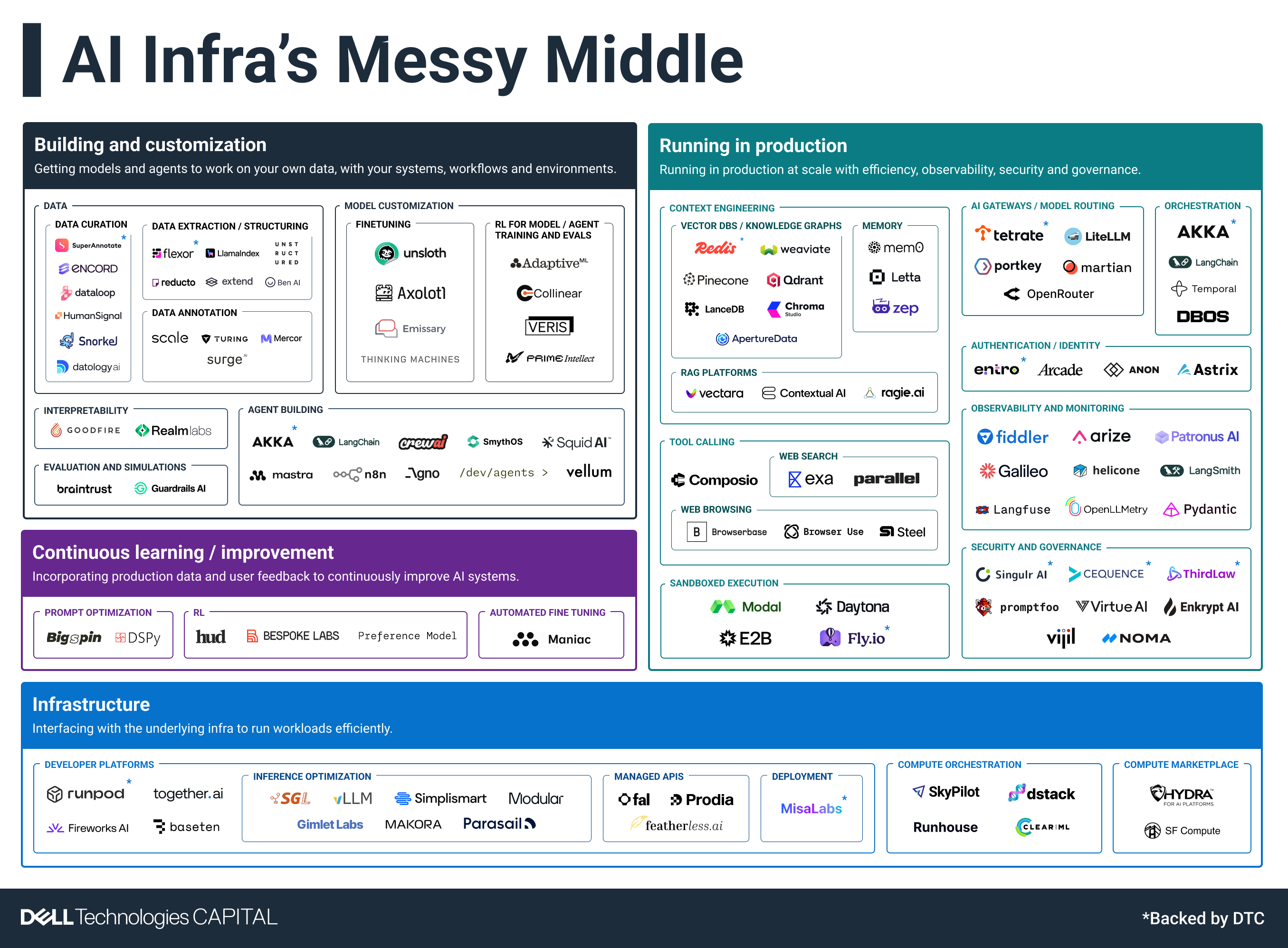

The first iteration of LLMOps was focused on putting guardrails around LLMs, feeding them the right prompts through techniques such as RAG, evaluating and observing the outputs, and securing models and prompts. As AI models advanced, the toolchain moved quickly as well. Practitioners shifted from manually fiddling with prompts to more mathematical prompt optimization approaches like DSPy. RAG evolved into context engineering. Fine-tuning showed success in some use cases. As LLM usage scaled, cost containment became more important. Practitioners began looking for ways to reduce costs by routing queries to lower-cost or lower-latency smaller models; model routers and AI gateways proliferated. Applications moved into multi-model systems and included new data modalities across text, image and video. As systems got more complex, stronger observability tools emerged to ensure applications were behaving as expected.
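To make the routing idea concrete, here is a minimal sketch of a cost-aware model router. Everything in it is an assumption for illustration: the model names, the prices, the keyword list and the difficulty heuristic are hypothetical, and production routers typically rely on learned classifiers or live latency and cost signals rather than a hand-written rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    max_difficulty: int        # highest difficulty score this model should handle


# Hypothetical catalog, ordered from cheapest to most expensive.
CATALOG = [
    ModelOption("small-fast", 0.10, 1),
    ModelOption("medium", 0.50, 2),
    ModelOption("large-frontier", 3.00, 3),
]

# Assumed keywords that hint at a reasoning-heavy request.
REASONING_HINTS = ("prove", "step by step", "analyze", "compare", "plan")


def estimate_difficulty(query: str) -> int:
    """Crude heuristic: long queries and reasoning keywords score higher (1 to 3)."""
    score = 1
    if len(query.split()) > 50:
        score += 1
    if any(hint in query.lower() for hint in REASONING_HINTS):
        score += 1
    return score


def route(query: str) -> ModelOption:
    """Return the cheapest model whose difficulty ceiling covers the query."""
    difficulty = estimate_difficulty(query)
    for option in CATALOG:
        if option.max_difficulty >= difficulty:
            return option
    return CATALOG[-1]  # fall back to the most capable model
```

Calling `route("What is the capital of France?")` selects the cheapest option in this toy catalog, while a long, analysis-heavy query falls through to the most expensive one. An AI gateway would wrap logic like this with authentication, rate limiting and logging.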

Early movers who persevered through the pre-LLM market cycles finally gained momentum, while new post-LLM startups positioned themselves for the next wave of demand.

Market Validation Through M&A

Recent acquisitions demonstrate the shift from “nice to have” to necessity for Messy Middle tech. Core infrastructure startups are being acquired as strategic assets at an increasing pace, and these deals have generated an estimated $10B+ in returns in just the last three years. Notable deals include:

[Image: notable deals]

These deals represent lasting value - proof that this layer is producing businesses with staying power beyond any single product cycle.

The Opportunity Ahead – LLMOps -> AgentOps

With agent development accelerating, operational challenges have expanded further, e.g. building reliable agents, orchestrating across them and monitoring their behavior. Agents are a completely new workload that brings new challenges such as orchestrating multiple models, managing memory, enabling and managing tool use, and communicating with other agents. These needs are now central in discussions about effective AI infrastructure and have created opportunities for early-stage companies to build viable businesses. Achieving AI at scale isn’t going to happen without this infrastructure layer.

The stack is being defined as we speak, and opportunities lie across the agent lifecycle:

- Building - enabling enterprises to build agents that leverage their data and embed into their respective workflows, systems and processes.

    - Translating from business processes to AI agent workflows

    - Enabling both technical and business users to build reliable agents

    - Connecting with enterprise data – not just for retrieval but also for taking actions on it.

    - Providing agents a diverse toolset to use such as API access to enterprise systems, browser/computer use for systems not accessible through APIs, code sandboxes

- Running at scale – running these systems reliably and cost effectively with observability, security and governance.

    - Managing context, memory and tool use for swarms of agents working collaboratively to achieve tasks

    - Ensuring appropriate failovers if an agent fails

    - If every user in an enterprise is “vibe building” agents, observability, auditability, governance, authentication, authorization, sandboxing AI generated code all become crucial

- Continuous learning – adding feedback loops to enable agents to continuously improve. This is one of the most interesting future developments. We’re moving towards a world with autonomous AI agents that adapt, learn and continuously improve over time to reliably perform long-horizon tasks, similar to human employees. While foundation models will continue to improve in general reasoning capabilities, there will be emerging areas where startups can fill a gap in the Messy Middle.

    - RL environments, gyms and data that replicate an enterprise and teach agents how to navigate each enterprise’s complex environments and tools

    - Prompts, verifiers and rubrics to evaluate agent performance in a given environment

    - Agent improvement through real traces as tasks are executed and human feedback on each task is gathered
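As a rough illustration of the verifiers and rubrics mentioned above, the sketch below scores a single agent trace against a weighted rubric. The `Trace` fields, the tool name `lookup_order`, the criteria and the weights are all hypothetical and chosen only for this example; real evaluation systems usually mix programmatic checks like these with model-based or human grading.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Trace:
    """Hypothetical record of one agent run."""
    tool_calls: list[str]
    final_answer: str
    steps: int


@dataclass
class Criterion:
    name: str
    weight: float
    check: Callable[[Trace], bool]  # verifier: True when the criterion is met


# Illustrative rubric for a made-up customer-support refund task.
RUBRIC = [
    Criterion("looked up the order", 0.5, lambda t: "lookup_order" in t.tool_calls),
    Criterion("answer mentions a refund", 0.3, lambda t: "refund" in t.final_answer.lower()),
    Criterion("finished within 10 steps", 0.2, lambda t: t.steps <= 10),
]


def score(trace: Trace, rubric: list[Criterion]) -> float:
    """Weighted fraction of rubric criteria the trace satisfies (0.0 to 1.0)."""
    total = sum(c.weight for c in rubric)
    earned = sum(c.weight for c in rubric if c.check(trace))
    return earned / total


def failed_criteria(trace: Trace, rubric: list[Criterion]) -> list[str]:
    """Names of the criteria the trace did not meet, useful as feedback."""
    return [c.name for c in rubric if not c.check(trace)]
```

A score like this could serve as a reward signal in an RL environment or as a regression check on production traces, and the list of failed criteria gives a starting point for human review.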

Interestingly, we’re already seeing initial consolidation between categories e.g. evaluations, simulations, data feedback loops and RL are all converging into a single testing, evaluation and continuous improvement solution. The startups that are pushing through are finding and executing on a high-value first wedge and then are quickly expanding to adjacent areas.

The Path Forward

The AI infra startups that have had breakout success didn’t sell into enterprises, but rather to the frontier labs or AI-native startups. Enterprises were still primarily buying pre-packaged applications and experimenting with infrastructure and software tooling. The Messy Middle began to change that in 2025. Acquisitions by the large platforms signal their belief in this part of the stack too. It’s a key enabler in allowing enterprises to graduate from buying packaged AI solutions to building their own AI applications and agents for more complex workflows. As the industry moves from standalone LLM calls to complex, multi-agent systems, this layer will help define reliability, safety, cost, governance, and ultimately business outcomes.

The progression from MLOps to LLMOps and now AgentOps shows just how quickly operational requirements are evolving. GPUs and foundation models matter, but they are no longer the full story. The opportunity now sits in the frameworks, orchestration engines, evaluation systems, security layers, and continuous-learning loops that make AI production-grade.

However, it’s clear there will also have to be a ton of consolidation. Will there be large standalone companies? It seems likely, though the winners are far from evident. 2026 will be a crucial year that determines who starts to break away from the pack and begins to build massive platforms.

DTC leads early-stage investments in the companies and teams that are building what’s next in enterprise technology. We invest early, stay committed, and offer unparalleled support in helping you land enterprise-scale sales. If that sounds interesting to you, let’s connect.

Radhika Malik is a partner at Dell Technologies Capital, where she invests across the enterprise stack, focusing on AI/ML, cloud infrastructure, and deep tech. Her DTC investments include Cartesia, RunPod, Savant, and several companies still in stealth.
